Introduction

Chatbots have become an integral part of modern applications, providing users with interactive and engaging experiences. In this guide, we’ll create a chatbot using LangChain, a powerful framework that simplifies the process of working with large language models. Our chatbot will have the following key features:

- Conversation memory to maintain context

- Customizable system prompts

- Ability to view and clear chat history

- Response time measurement

By the end of this article, you’ll have a fully functional chatbot that you can further customize and integrate into your own projects. Whether you’re new to LangChain or looking to expand your AI application development skills, this guide will give you a solid foundation for creating intelligent, context-aware chatbots.

Overview

- Understand the fundamental concepts and features of LangChain, including chaining, memory, prompts, agents, and integration.

- Install and configure Python 3.7+ and the necessary libraries to build and run a LangChain-based chatbot.

- Modify system prompts, memory settings, and temperature parameters to tailor the chatbot’s behavior and capabilities.

- Integrate error handling, logging, user input validation, and conversation state management for robust and scalable chatbot applications.

Prerequisites

Before diving into this article, you should have:

- Python Knowledge: Intermediate understanding of Python programming.

- API Basics: Familiarity with API concepts and usage.

- Environment Setup: Ability to set up a Python environment and install packages.

- OpenAI Account: An account with OpenAI to obtain an API key.

Technical Requirements

To follow along with this tutorial, you’ll need:

- Python 3.7+: Our code is compatible with Python 3.7 or later versions.

- pip: The Python package installer, to install required libraries.

- OpenAI API Key: You’ll need to sign up for an OpenAI account and obtain an API key.

- Text Editor or IDE: Any text editor or integrated development environment of your choice.

What is LangChain?

LangChain is an open-source framework designed to simplify the development of applications using large language models (LLMs). It provides a set of tools and abstractions that make it easier to build complex, context-aware applications powered by AI. Some key features of LangChain include:

- Chaining: Easily combine multiple components to create complex workflows.

- Memory: Implement various types of memory to maintain context in conversations.

- Prompts: Manage and optimize prompts for different use cases.

- Agents: Create AI agents that can use tools and make decisions.

- Integration: Connect with external data sources and APIs.

Now that we’ve covered what LangChain is, how we’ll use it, and the prerequisites for this tutorial, let’s move on to setting up our development environment.

Setting Up the Environment

Before we dive into the code, let’s set up our development environment. We’ll need to install a few dependencies to get our chatbot up and running.

First, make sure you have Python 3.7 or later installed on your system. Then, create a new directory for your project and set up a virtual environment:

Terminal:

    mkdir langchainchatbot
    cd langchainchatbot
    python -m venv venv
    source venv/bin/activate

Now, install the required dependencies:

    pip install langchain openai python-dotenv colorama

Next, create a .env file in your project directory to store your OpenAI API key:

    OPENAI_API_KEY=your_api_key_here

Replace your_api_key_here with your actual OpenAI API key.
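Before running the chatbot, it can help to confirm that the key is actually visible to Python. The following is a minimal sketch using only the standard library. The helper names `require_api_key` and `mask_key` are hypothetical, and the sketch assumes the key has already been placed in the process environment (for example by python-dotenv’s `load_dotenv()`, or by exporting it in your shell).

```python
import os


def require_api_key(var_name: str = "OPENAI_API_KEY") -> str:
    """Return the API key from the environment, or raise a clear error.

    Hypothetical helper for illustration; not part of LangChain or OpenAI.
    """
    key = os.environ.get(var_name, "").strip()
    # Treat the tutorial's placeholder value as "not configured".
    if not key or key == "your_api_key_here":
        raise RuntimeError(
            f"{var_name} is not set. Add it to your .env file or export it "
            "in your shell before starting the chatbot."
        )
    return key


def mask_key(key: str, visible: int = 4) -> str:
    """Hide all but the last few characters so the key is safe to print."""
    if len(key) <= visible:
        return "*" * len(key)
    return "*" * (len(key) - visible) + key[-visible:]
```

Calling `require_api_key()` at startup turns a missing key into an immediate, readable error instead of a failure on the first API call.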

Understanding the Code Structure

Our chatbot implementation consists of several key components:

- Import statements for required libraries and modules

- Utility functions for formatting messages and retrieving chat history

- The main run_chatgpt_chatbot function that sets up and runs the chatbot

- A conditional block to run the chatbot when the script is executed directly

Let’s break down each of these components in detail.

Implementing the Chatbot

Let us now look at the steps to implement the chatbot.

Step1: Importing Dependencies

First, let’s import the necessary modules and libraries:

    import time
    from typing import List, Tuple
    import sys
    from colorama import Fore, Style, init
    from langchain.chat_models import ChatOpenAI
    from langchain.prompts import ChatPromptTemplate, MessagesPlaceholder
    from langchain.memory import ConversationBufferWindowMemory
    from langchain.schema import SystemMessage, HumanMessage, AIMessage
    from langchain.schema.runnable import RunnablePassthrough, RunnableLambda
    from operator import itemgetter

These imports provide us with the tools we need to create our chatbot:

- time: For measuring response time

- typing: For type hinting

- sys: For system-related operations

- colorama: For adding color to our console output

- langchain: Various modules for building our chatbot

- operator: For the itemgetter function

Note: these import paths match the LangChain release this guide was written against. Newer releases ship ChatOpenAI in the separate langchain-openai package (from langchain_openai import ChatOpenAI), so adjust the imports if you see deprecation warnings.

Step2: Define Utility Functions

Next, let’s define some utility functions that will help us manage our chatbot:

    def format_message(role: str, content: str) -> str:
        return f"{role.capitalize()}: {content}"

    def get_chat_history(memory) -> List[Tuple[str, str]]:
        return [(msg.type, msg.content) for msg in memory.chat_memory.messages]

    def print_typing_effect(text: str, delay: float = 0.03):
        for char in text:
            sys.stdout.write(char)
            sys.stdout.flush()
            time.sleep(delay)
        print()

- format_message: Formats a message as "Role: content", capitalizing the role (for example, "human" becomes "Human")

- get_chat_history: Retrieves the chat history from the memory object as a list of (role, content) pairs

- print_typing_effect: Creates a typing effect when printing text to the console
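To see what these helpers produce without calling the API, the sketch below feeds them a stand-in memory object. The `SimpleNamespace` objects are assumptions that only mimic the `chat_memory.messages` attribute path and the `type` and `content` fields the helpers read; they are not LangChain classes.

```python
from types import SimpleNamespace
from typing import List, Tuple


def format_message(role: str, content: str) -> str:
    return f"{role.capitalize()}: {content}"


def get_chat_history(memory) -> List[Tuple[str, str]]:
    return [(msg.type, msg.content) for msg in memory.chat_memory.messages]


# Stand-in for a LangChain memory object (illustrative only).
fake_memory = SimpleNamespace(
    chat_memory=SimpleNamespace(
        messages=[
            SimpleNamespace(type="human", content="Hello!"),
            SimpleNamespace(type="ai", content="Hi, how can I help?"),
        ]
    )
)

history = get_chat_history(fake_memory)
lines = [format_message(role, content) for role, content in history]
for line in lines:
    print(line)
# Human: Hello!
# Ai: Hi, how can I help?
```

Note that `str.capitalize()` lowercases everything after the first letter, so the "ai" role is displayed as "Ai" rather than "AI".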

Step3: Defining the Main Chatbot Function

The heart of our chatbot is the run_chatgpt_chatbot function. Let’s break it down into smaller sections:

    def run_chatgpt_chatbot(system_prompt="", history_window=30, temperature=0.3):
        # Initialize the ChatOpenAI model
        model = ChatOpenAI(model_name="gpt-3.5-turbo", temperature=temperature)

        # Set the system prompt
        if system_prompt:
            SYS_PROMPT = system_prompt
        else:
            SYS_PROMPT = "Act as a helpful AI Assistant"

        # Create the chat prompt template
        prompt = ChatPromptTemplate.from_messages(
            [
                ('system', SYS_PROMPT),
                MessagesPlaceholder(variable_name="history"),
                ('human', '{input}')
            ]
        )

The snippet above covers the first part of the function (model, system prompt, and prompt template); the remaining parts appear in the complete code later in this article. In full, the function does the following:

- Initializes the ChatOpenAI model with the specified temperature.

- Sets up the system prompt and chat prompt template.

- Creates a conversation memory with the specified history window.

- Sets up the conversation chain using LangChain’s RunnablePassthrough and RunnableLambda.

- Enters a loop to handle user input and generate responses.

- Processes special commands like ‘STOP’, ‘HISTORY’, and ‘CLEAR’.

- Measures response time and displays it to the user.

- Saves the conversation context in memory.
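The conversation chain described above can be pictured as three steps applied in order: add the stored history to the input dictionary, fill the prompt template, and call the model. The sketch below imitates that flow in plain Python so it runs without an API key. The names `make_assign_history`, `build_prompt`, and `fake_model` are hypothetical stand-ins, not LangChain functions; in the real chain these roles are played by `RunnablePassthrough.assign`, the `ChatPromptTemplate`, and `ChatOpenAI`.

```python
from typing import Callable, Dict, List, Tuple

Message = Tuple[str, str]  # (role, content)


def make_assign_history(load_history: Callable[[], List[Message]]):
    """Return a step that copies the input dict and adds a 'history' key."""
    def assign_history(inputs: Dict) -> Dict:
        return {**inputs, "history": load_history()}
    return assign_history


def build_prompt(inputs: Dict, system_prompt: str) -> List[Message]:
    """Order messages as: system prompt, past turns, then the new input."""
    return [("system", system_prompt), *inputs["history"], ("human", inputs["input"])]


def fake_model(messages: List[Message]) -> str:
    """Stand-in for the LLM call: reports what it was given."""
    return f"Received {len(messages)} messages; last was: {messages[-1][1]}"


stored: List[Message] = [("human", "Hi"), ("ai", "Hello!")]
assign_history = make_assign_history(lambda: list(stored))

step1 = assign_history({"input": "What is LangChain?"})        # adds 'history'
step2 = build_prompt(step1, "Act as a helpful AI Assistant")   # fills the template
reply = fake_model(step2)                                      # "calls" the model
print(reply)
# Received 4 messages; last was: What is LangChain?
```

The point of the first step is that the caller only supplies `input`; the history is looked up at call time, so each invocation sees whatever the memory holds at that moment.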

Step4: Running the Chatbot

Finally, we add a conditional block to run the chatbot when the script is executed directly:

    if __name__ == "__main__":
        run_chatgpt_chatbot()

This allows us to run the chatbot by simply executing the Python script.

Advanced Features

Our chatbot implementation includes several advanced features that enhance its functionality and user experience.

Chat History

Users can view the chat history by typing ‘HISTORY’. This feature leverages the ConversationBufferWindowMemory to store and retrieve past messages:

    elif user_input.strip().upper() == 'HISTORY':
        chat_history = get_chat_history(memory)
        print("\n--- Chat History ---")
        for role, content in chat_history:
            print(format_message(role, content))
        print("--- End of History ---\n")
        continue

Clearing Conversation Memory

Users can clear the conversation memory by typing ‘CLEAR’. This resets the context and allows for a fresh start:

    elif user_input.strip().upper() == 'CLEAR':
        memory.clear()
        print_typing_effect('ChatGPT: Chat history has been cleared.')
        continue

Response Time Measurement

The chatbot measures and displays the response time for each interaction, giving users an idea of how long it takes to generate a reply:

    start_time = time.time()
    reply = conversation_chain.invoke(user_inp)
    end_time = time.time()
    response_time = end_time - start_time
    print(f"(Response generated in {response_time:.2f} seconds)")

Customization Options

Our chatbot implementation offers several customization options:

- System Prompt: You can provide a custom system prompt to set the chatbot’s behavior and personality.

- History Window: Adjust the history_window parameter to control how many past messages the chatbot remembers.

- Temperature: Modify the temperature parameter to control the randomness of the chatbot’s responses.

To customize these options, you can modify the function call in the if __name__ == "__main__": block:

    if __name__ == "__main__":
        run_chatgpt_chatbot(
            system_prompt="You are a friendly and knowledgeable AI assistant specializing in technology.",
            history_window=50,
            temperature=0.7
        )
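To make the effect of history_window more concrete, here is a conceptual sketch of a sliding-window memory that keeps only the most recent exchanges. `WindowMemory` is a hypothetical class built on `collections.deque`; it illustrates the idea behind a windowed buffer and is not LangChain’s ConversationBufferWindowMemory implementation.

```python
from collections import deque
from typing import Deque, List, Tuple


class WindowMemory:
    """Keep only the last `k` user/assistant exchanges (illustrative only)."""

    def __init__(self, k: int) -> None:
        if k < 1:
            raise ValueError("k must be at least 1")
        # A deque with maxlen drops the oldest exchange once it is full.
        self._exchanges: Deque[Tuple[str, str]] = deque(maxlen=k)

    def save_context(self, user_text: str, ai_text: str) -> None:
        self._exchanges.append((user_text, ai_text))

    def load_messages(self) -> List[Tuple[str, str]]:
        """Flatten stored exchanges into (role, content) pairs, oldest first."""
        messages: List[Tuple[str, str]] = []
        for user_text, ai_text in self._exchanges:
            messages.append(("human", user_text))
            messages.append(("ai", ai_text))
        return messages

    def clear(self) -> None:
        self._exchanges.clear()


memory = WindowMemory(k=2)
memory.save_context("My name is Ada.", "Nice to meet you, Ada.")
memory.save_context("I like Python.", "Python is a great choice.")
memory.save_context("What is 2 + 2?", "4")

# The first exchange has been pushed out of the window.
window = memory.load_messages()
print(window[0])
# ('human', 'I like Python.')
```

With a window this small, the assistant would no longer see the user’s name by the third turn. A larger window preserves more context at the price of longer prompts on every call.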

import time

from typing import Checklist, Tuple

import sys

import time

from colorama import Fore, Fashion, init

from langchain.chat_models import ChatOpenAI

from langchain.prompts import ChatPromptTemplate, MessagesPlaceholder

from langchain.reminiscence import ConversationBufferWindowMemory

from langchain.schema import SystemMessage, HumanMessage, AIMessage

from langchain.schema.runnable import RunnablePassthrough, RunnableLambda

from operator import itemgetter

def format_message(function: str, content material: str) -> str:

return f"{function.capitalize()}: {content material}"

def get_chat_history(reminiscence) -> Checklist[Tuple[str, str]]:

return [(msg.type, msg.content) for msg in memory.chat_memory.messages]

def run_chatgpt_chatbot(system_prompt="", history_window=30, temperature=0.3):

mannequin = ChatOpenAI(model_name="gpt-3.5-turbo", temperature=temperature)

if system_prompt:

SYS_PROMPT = system_prompt

else:

SYS_PROMPT = "Act as a useful AI Assistant"

immediate = ChatPromptTemplate.from_messages(

[

('system', SYS_PROMPT),

MessagesPlaceholder(variable_name="history"),

('human', '{input}')

]

)

reminiscence = ConversationBufferWindowMemory(ok=history_window, return_messages=True)

conversation_chain = (

RunnablePassthrough.assign(

historical past=RunnableLambda(reminiscence.load_memory_variables) | itemgetter('historical past')

)

| immediate

| mannequin

)

print_typing_effect("Hi there, I'm your pleasant chatbot. Let's chat!")

print("Kind 'STOP' to finish the dialog, 'HISTORY' to view chat historical past, or 'CLEAR' to clear the chat historical past.")

whereas True:

user_input = enter('Person: ')

if user_input.strip().higher() == 'STOP':

print_typing_effect('ChatGPT: Goodbye! It was a pleasure chatting with you.')

break

elif user_input.strip().higher() == 'HISTORY':

chat_history = get_chat_history(reminiscence)

print("n--- Chat Historical past ---")

for function, content material in chat_history:

print(format_message(function, content material))

print("--- Finish of Historical past ---n")

proceed

elif user_input.strip().higher() == 'CLEAR':

reminiscence.clear()

print_typing_effect('ChatGPT: Chat historical past has been cleared.')

proceed

user_inp = {'enter': user_input}

start_time = time.time()

reply = conversation_chain.invoke(user_inp)

end_time = time.time()

response_time = end_time - start_time

print(f"(Response generated in {response_time:.2f} seconds)")

print_typing_effect(f'ChatGPT: {reply.content material}')

reminiscence.save_context(user_inp, {'output': reply.content material})

if __name__ == "__main__":

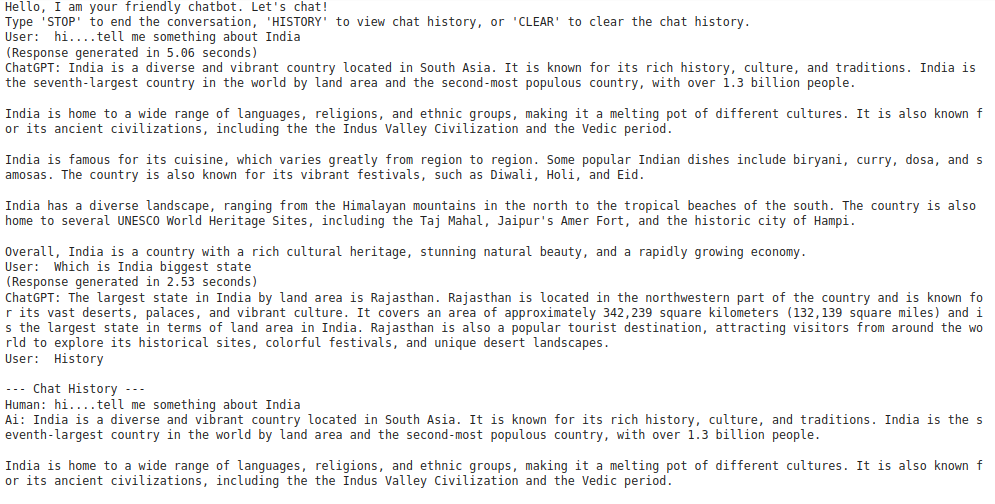

run_chatgpt_chatbot()Output:

Best Practices and Tips

When working with this chatbot implementation, consider the following best practices and tips:

- API Key Security: Always store your OpenAI API key in an environment variable or a secure configuration file. Never hardcode it in your script.

- Error Handling: Add try-except blocks to handle potential errors, such as network issues or API rate limits.

- Logging: Implement logging to track conversations and errors for debugging and analysis.

- User Input Validation: Add more robust input validation to handle edge cases and prevent potential issues.

- Conversation State: Consider implementing a way to save and load conversation states, allowing users to resume chats later.

- Rate Limiting: Implement rate limiting to prevent excessive API calls and manage costs.

- Multi-turn Conversations: Experiment with different memory types in LangChain to handle more complex, multi-turn conversations.

- Model Selection: Try different OpenAI models (e.g., GPT-4) to see how they affect the chatbot’s performance and capabilities.
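The error-handling and logging advice above can be sketched as a small retry wrapper with exponential backoff. `call_with_retries` is a hypothetical helper, not part of LangChain or the OpenAI client. It catches a generic `Exception` for brevity; real code should catch the specific exceptions raised by the client library in use.

```python
import logging
import time
from typing import Callable, TypeVar

T = TypeVar("T")
logger = logging.getLogger("chatbot")


def call_with_retries(
    func: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `func`, retrying on failure with exponentially growing delays."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except Exception as exc:  # Narrow this to your client's error types.
            if attempt == max_attempts:
                logger.error("Giving up after %d attempts: %s", attempt, exc)
                raise
            # Delay doubles each time: base_delay, 2x, 4x, ...
            delay = base_delay * 2 ** (attempt - 1)
            logger.warning(
                "Attempt %d failed (%s); retrying in %.1fs", attempt, exc, delay
            )
            sleep(delay)
    raise RuntimeError("unreachable")  # The loop always returns or raises.
```

In the chat loop, the model call could then be wrapped as `reply = call_with_retries(lambda: conversation_chain.invoke(user_inp))`. The `sleep` parameter exists so the delays can be replaced in tests without actually waiting.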

Conclusion

In this comprehensive guide, we’ve built a powerful chatbot using LangChain and OpenAI’s GPT-3.5-turbo. This chatbot serves as a solid foundation for more complex applications. You can extend its functionality by adding features like:

- Integration with external APIs for real-time data

- Natural language processing for intent recognition

- Multimodal interactions (e.g., handling images or audio)

- User authentication and personalization

By leveraging the power of LangChain and large language models, you can create sophisticated conversational AI applications that provide value to users across various domains. Remember to always consider ethical implications when deploying AI-powered chatbots, and ensure that your implementation adheres to OpenAI’s usage guidelines and your local regulations regarding AI and data privacy.

With this foundation, you’re well-equipped to explore the exciting world of conversational AI and create innovative applications that push the boundaries of human-computer interaction.

Frequently Asked Questions

Q1. What are the prerequisites for this tutorial?

A. You should have an intermediate understanding of Python programming, familiarity with API concepts, the ability to set up a Python environment and install packages, and an OpenAI account to obtain an API key.

Q2. What are the technical requirements?

A. You need Python 3.7 or later, pip (the Python package installer), an OpenAI API key, and a text editor or integrated development environment (IDE) of your choice.

Q3. What are the key components of the chatbot implementation?

A. The key components are import statements for required libraries, utility functions for formatting messages and retrieving chat history, the main function (run_chatgpt_chatbot) that sets up and runs the chatbot, and a conditional block to execute the chatbot script directly.