Introduction

Cohere launched its next-generation foundation model, Rerank 3, for efficient enterprise search and Retrieval Augmented Generation (RAG). The Rerank model is compatible with any kind of database or search index and can also be integrated into any legacy application with native search capabilities. A single line of code can improve search performance or reduce the cost of running a RAG application, with negligible impact on latency.

Let’s explore how this foundation model is set to advance enterprise search and RAG systems, with enhanced accuracy and efficiency.

Capabilities of Rerank

Rerank 3 offers the following capabilities for enterprise search:

- A 4K context length, which significantly improves search quality for longer-form documents.

- Search over multi-aspect and semi-structured data such as tables, code, JSON documents, invoices, and emails.

- Coverage of more than 100 languages.

- Improved latency and reduced total cost of ownership (TCO).

Generative AI models with long contexts are capable of running RAG on their own. To improve accuracy, latency, and cost, however, a RAG solution needs a combination of generative AI models and a Rerank model. The high-precision semantic reranking of Rerank 3 makes sure that only the relevant information is fed to the generation model, which increases response accuracy and keeps latency and cost very low, especially when retrieving information from millions of documents.
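
To make the "only the relevant information is fed to the generation model" step concrete, here is a minimal sketch of a rerank-then-generate filter. The scorer `toy_relevance` is a hypothetical word-overlap stand-in, not Rerank 3, and the function names and sample documents are assumptions made for illustration. In a real system the scoring step would be a call to a reranking model.

```python
from typing import Callable, List, Tuple


def toy_relevance(query: str, document: str) -> float:
    """Hypothetical stand-in scorer: fraction of query words found in the document."""
    query_words = set(query.lower().split())
    doc_words = set(document.lower().split())
    if not query_words:
        return 0.0
    return len(query_words & doc_words) / len(query_words)


def rerank(
    query: str,
    documents: List[str],
    top_n: int,
    score_fn: Callable[[str, str], float] = toy_relevance,
) -> List[Tuple[int, float]]:
    """Score every candidate and keep the top_n as (index, score) pairs."""
    scored = [(i, score_fn(query, doc)) for i, doc in enumerate(documents)]
    # Python's sort is stable, so ties keep their first-stage order.
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]


# Candidates as they might come back from a first-stage keyword or vector search.
candidates = [
    "Quarterly revenue report for the finance team",
    "How to reset your account password",
    "Password policy and account lockout rules",
    "Office party schedule",
]

top = rerank("reset account password", candidates, top_n=2)

# Only the reranked subset is passed on as context for the generation model.
context = [candidates[i] for i, _ in top]
```

The generation model then sees two documents instead of four, which is the mechanism behind the accuracy and cost claims above.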

Enhanced Enterprise Search

Enterprise data is often very complex, and the systems already in place in an organization struggle to search through multi-aspect and semi-structured data sources. In most organizations, the most useful data is not in a simple document format; formats such as JSON are very common across enterprise applications. Rerank 3 can rank complex, multi-aspect data such as emails based on all of their relevant metadata fields, including their recency.
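
As an illustration of ranking on metadata fields, one common way to hand a semi-structured record to a text reranker is to serialize the chosen fields into a single string. The sketch below assumes this approach; `flatten_record`, the field names, and the sample email are all hypothetical and do not describe how Rerank 3 handles JSON internally.

```python
from typing import Any, Dict, List


def flatten_record(record: Dict[str, Any], fields: List[str]) -> str:
    """Serialize the selected fields as 'name: value' lines, in the given order."""
    parts = []
    for name in fields:
        value = record.get(name)
        if value is None:
            continue  # skip fields that are missing from this record
        parts.append(f"{name}: {value}")
    return "\n".join(parts)


# Hypothetical email record with metadata alongside the body.
email = {
    "from": "ana@example.com",
    "subject": "Invoice 1042 overdue",
    "date": "2024-04-02",
    "body": "Please settle the attached invoice by Friday.",
    "thread_id": 77,
}

# Include the date so that recency is visible to the ranking step.
text = flatten_record(email, ["subject", "from", "date", "body"])
```

Choosing which fields to include, and in what order, is a design decision: fields left out (here `thread_id`) cannot influence the relevance score.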

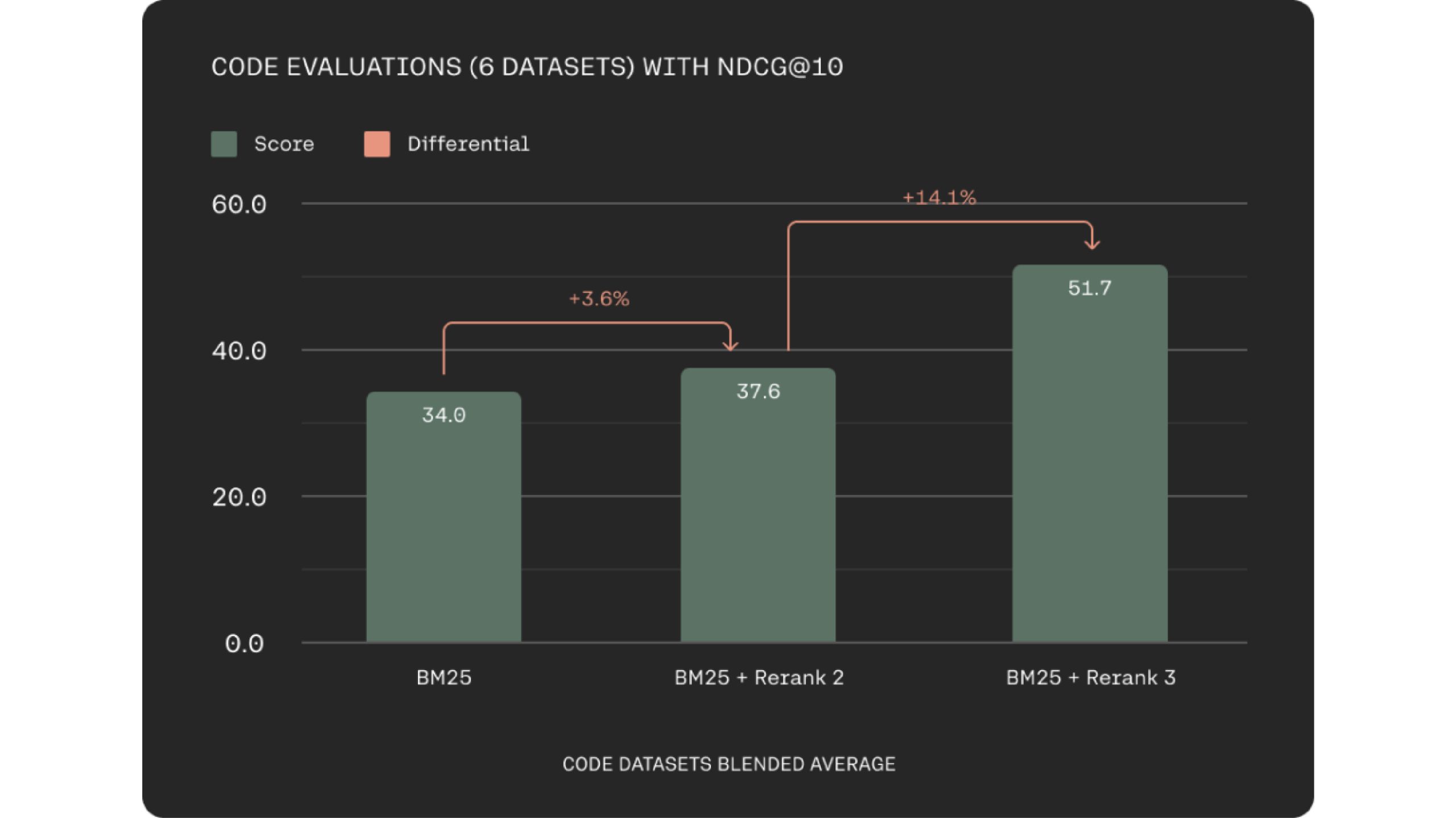

Rerank 3 is also significantly better at retrieving code. This can boost engineers’ productivity by helping them find the right code snippets faster, whether within their company’s codebase or across vast documentation repositories.

Large technology companies also deal with multilingual data sources, and multilingual retrieval has long been the biggest challenge for keyword-based methods. The Rerank 3 models offer strong multilingual performance across more than 100 languages, simplifying retrieval for non-English-speaking customers.

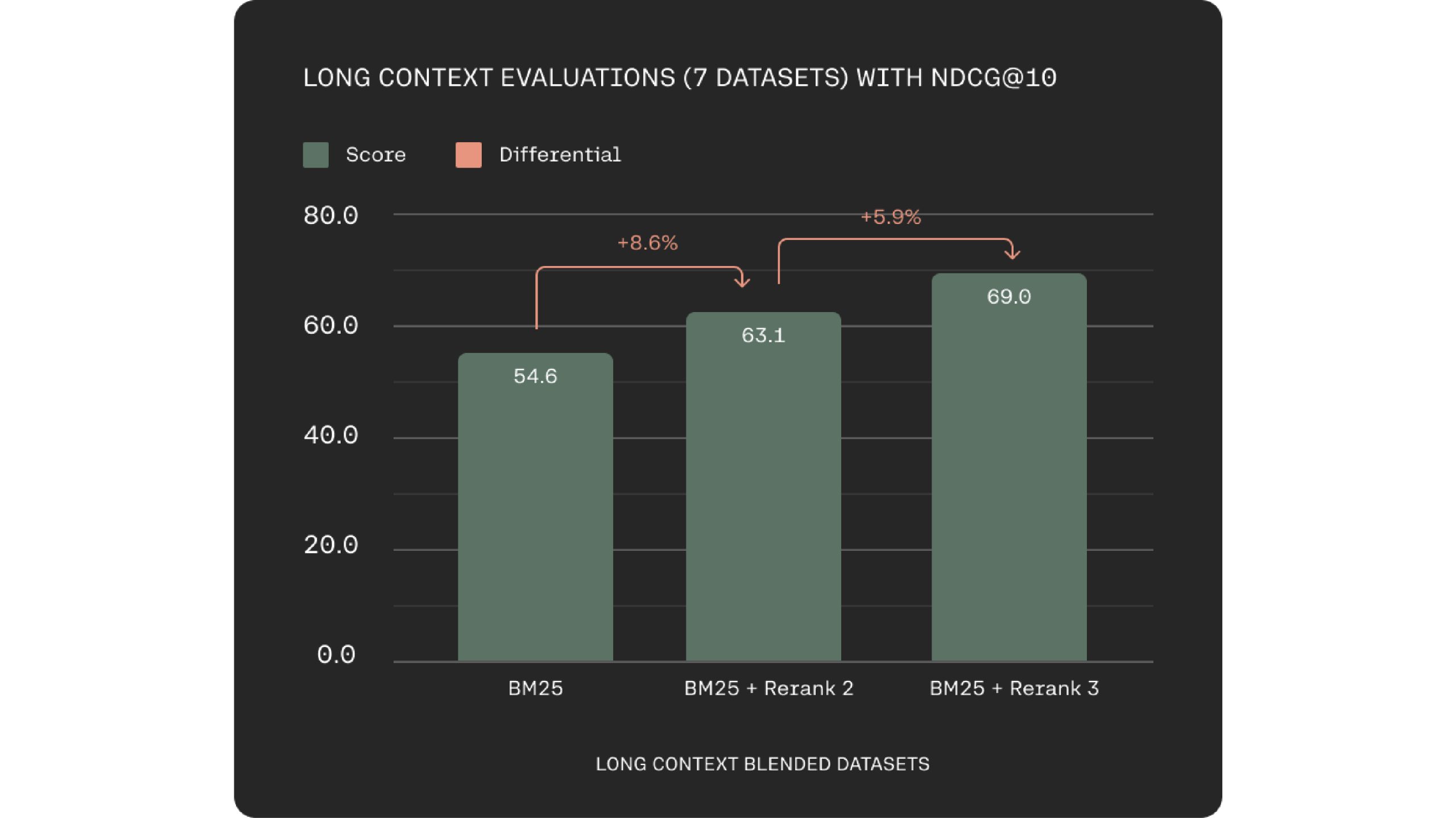

A key challenge in semantic search and RAG systems is optimizing how data is chunked. Rerank 3 addresses this with a 4k context window, enabling direct processing of larger documents. This lets the model consider more context during relevance scoring.
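
The effect of a larger context window on chunking can be sketched with a simple splitter. The example below is a rough approximation under stated assumptions: it counts whitespace-separated words as "tokens", which is not how a real tokenizer works, and it ignores the share of the window taken up by the query. `chunk_by_budget` and the 512-token comparison window are hypothetical.

```python
from typing import List


def chunk_by_budget(text: str, max_tokens: int) -> List[str]:
    """Split text into consecutive chunks of at most max_tokens words."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    words = text.split()
    return [
        " ".join(words[start:start + max_tokens])
        for start in range(0, len(words), max_tokens)
    ]


# A synthetic 10,000-word document.
document = " ".join(f"w{i}" for i in range(10_000))

small_window = chunk_by_budget(document, 512)   # hypothetical smaller window
large_window = chunk_by_budget(document, 4096)  # roughly a 4k window

print(len(small_window), len(large_window))
```

Fewer, larger chunks mean fewer places where a relevant passage is cut in half, and fewer items to score per document.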

Rerank 3 is also supported in Elastic’s Inference API. Elasticsearch is a widely adopted search technology, and the keyword and vector search capabilities in the Elasticsearch platform are built to handle larger and more complex enterprise data efficiently.

“We’re excited to be partnering with Cohere to help businesses unlock the potential of their data,” said Matt Riley, GVP and GM of Elasticsearch. Cohere’s advanced retrieval models, Embed 3 and Rerank 3, offer excellent performance on complex and large enterprise data. They solve hard retrieval problems and are becoming essential components of any enterprise search system.

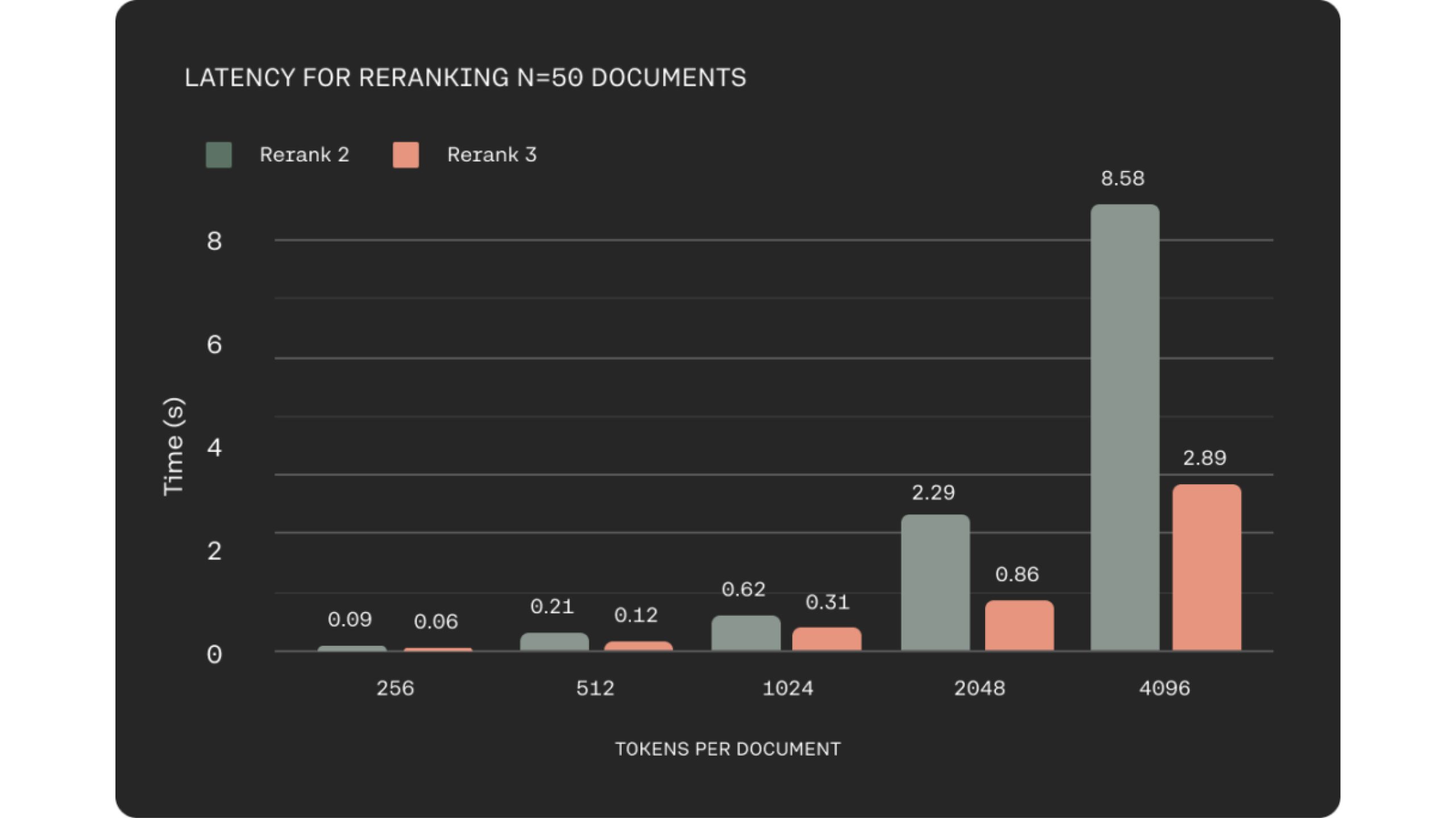

Improved Latency with Longer Context

In many business domains such as e-commerce or customer service, low latency is crucial to delivering a quality experience. Cohere kept this in mind while building Rerank 3, which shows up to 2x lower latency than Rerank 2 for shorter document lengths and up to 3x improvements at long context lengths.

Better Performance and Efficient RAG

In Retrieval-Augmented Generation (RAG) systems, the document retrieval stage is critical for overall performance. Rerank 3 addresses two essential factors for strong RAG performance: response quality and latency. The model excels at pinpointing the documents most relevant to a user’s query through its semantic reranking capabilities.

This targeted retrieval process directly improves the accuracy of the RAG system’s responses. By enabling efficient retrieval of pertinent information from large datasets, Rerank 3 helps large enterprises unlock the value of their proprietary data. This supports various business functions, including customer support, legal, HR, and finance, by providing them with the most relevant information to address user queries.

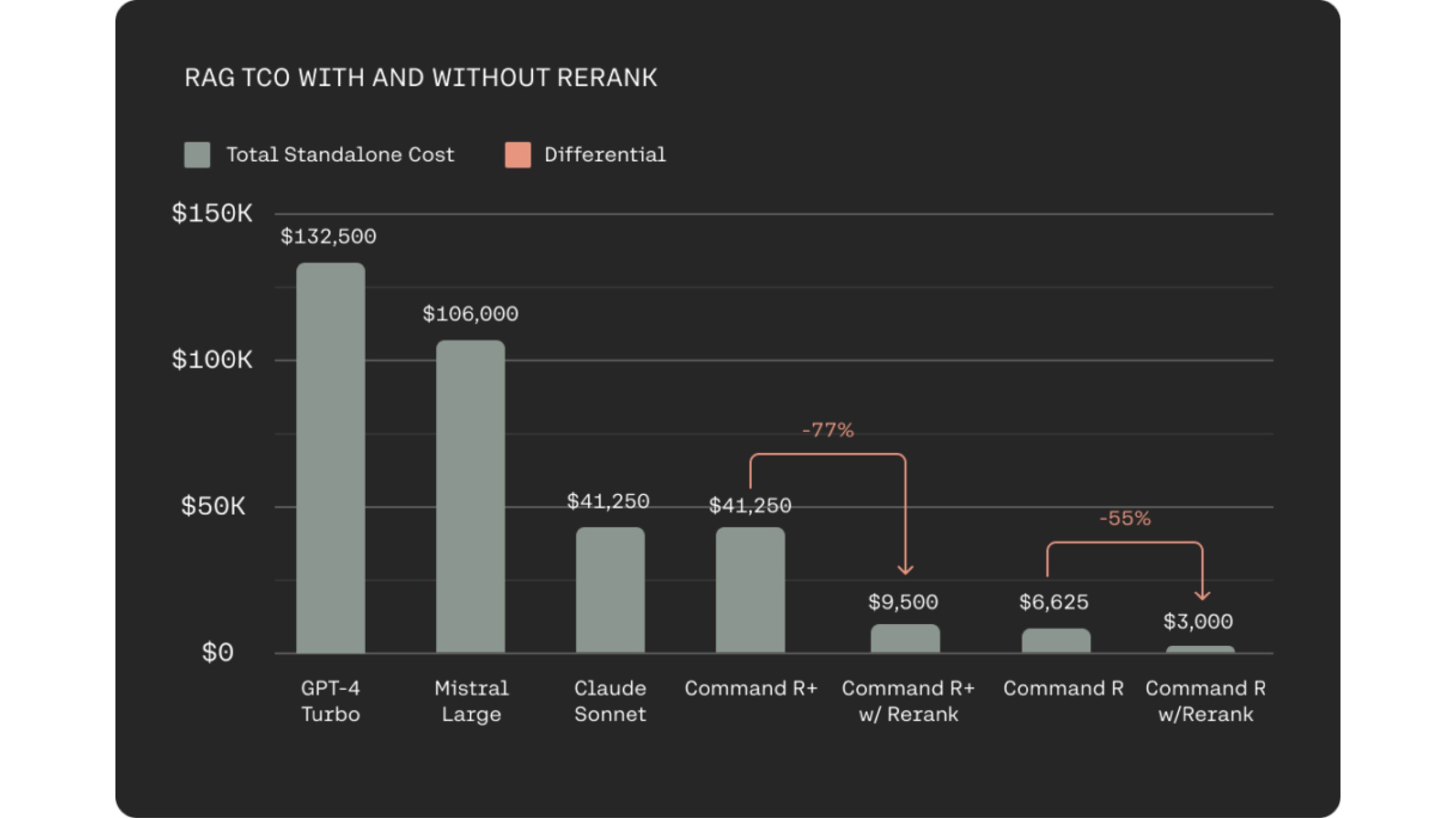

Integrating Rerank 3 with the cost-effective Command R family for RAG systems offers a significant reduction in Total Cost of Ownership (TCO) for users. This is achieved through two key factors. First, Rerank 3 selects highly relevant documents, so the LLM has to process fewer documents to generate a grounded response. This maintains response accuracy while minimizing latency. Second, the combined efficiency of Rerank 3 and Command R models leads to cost reductions of 80-93% compared to other generative LLMs on the market. In fact, when considering the cost savings from both Rerank 3 and Command R, total cost reductions can surpass 98%.
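
The way the two savings compound can be worked through with a small calculation. Every number in the sketch below is hypothetical and chosen only to make the arithmetic easy to follow: the document counts, tokens per document, and prices are not Cohere's figures, and the cost of the reranking call itself is left out.

```python
def generation_cost(num_docs: int, tokens_per_doc: int, price_per_million: float) -> float:
    """Input-token cost of sending num_docs documents to a generation model."""
    return num_docs * tokens_per_doc * price_per_million / 1_000_000


def reduction(before: float, after: float) -> float:
    """Fractional cost reduction when going from `before` to `after`."""
    return 1 - after / before


# Hypothetical baseline: 50 retrieved documents sent to a pricier model.
baseline = generation_cost(num_docs=50, tokens_per_doc=500, price_per_million=10.0)

# Factor 1: reranking keeps only the 5 most relevant documents.
fewer_docs = generation_cost(num_docs=5, tokens_per_doc=500, price_per_million=10.0)

# Factor 2: the same 5 documents go to a cheaper model (hypothetical price).
fewer_docs_cheaper_model = generation_cost(num_docs=5, tokens_per_doc=500, price_per_million=2.0)

print(f"fewer documents only: {reduction(baseline, fewer_docs):.0%}")
print(f"both factors:         {reduction(baseline, fewer_docs_cheaper_model):.0%}")
```

Because the two factors multiply, a 90% cut in tokens combined with an 80% cut in price leaves 0.1 × 0.2 = 2% of the original cost in this hypothetical, which is how combined reductions can approach the high-90s range.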

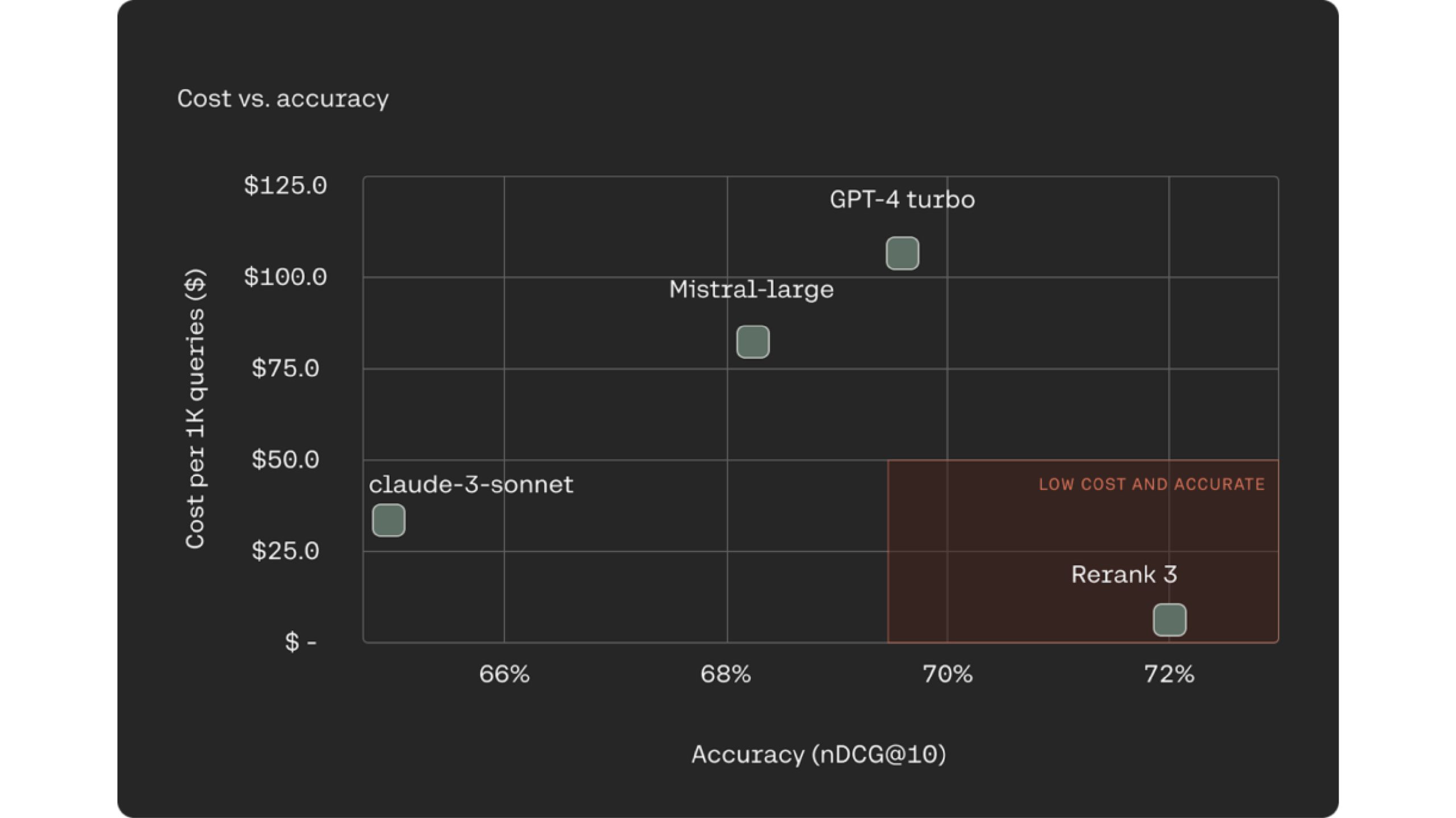

One increasingly common and well-known approach for RAG systems is using LLMs as rerankers in the document retrieval process. Rerank 3 outperforms industry-leading LLMs like Claude 3 Sonnet and GPT Turbo on ranking accuracy while being 90-98% less expensive.

Rerank 3 improves the accuracy and quality of the LLM response. It also helps reduce end-to-end TCO. Rerank achieves this by weeding out less relevant documents, so that answers are drawn only from the small subset of relevant ones.

Conclusion

Rerank 3 is a significant step forward for enterprise search and RAG systems. It delivers high accuracy on complex data structures and across multiple languages. Its longer context window reduces the need for data chunking, and it lowers latency and total cost of ownership. The result is faster search and more cost-effective RAG implementations. It also integrates with Elasticsearch for improved decision-making and customer experiences.
